The "Green" Backend: How Rust Slashes Your Cloud Bills (and Carbon Footprint)

Are your soaring cloud infrastructure bills keeping you up at night? For CTOs and startup founders, the cost of scaling a backend can sometimes outpace revenue. But what if the solution wasn't adding more servers, but changing the core language you build with? Discover why more teams are moving to Rust, the high-performance, memory-safe language that manages memory without a runtime garbage collector. Learn how a "green backend" built on Rust can help you handle the same traffic with roughly a third of the containers, cutting both your operational costs and your carbon footprint.

Introduction: The Invisible Cost of Scale

When you’re a startup founder, a breakout month of growth is a cause for celebration. But for your CTO, it’s often a moment of panic. The sudden traffic spike means the "scaling alert" bells are ringing, and the automatic solution is always the same: provision more containers. Spin up more instances.

In an era of Node.js, Python, and Java, scaling out has become the default answer to growth. We’ve grown accustomed to a "software sprawl" that solves immediate performance issues by throwing hardware, and money, at the problem.

But in 2026, a new imperative is emerging. It’s no longer just about meeting demand; it’s about meeting demand sustainably and efficiently. The current model, while functional, is fundamentally wasteful, both economically and environmentally. The core culprit isn’t your user base—it’s the architectural choices embedded in your programming language.

It’s time to talk about the "Green Backend" and why the industry is embracing Rust to build it.

The Elephant in the Room: The "Garbage Collector" Tax

Most modern backend languages—including Java, Go, Python, and Ruby—rely on a mechanism known as a "Garbage Collector" (GC). A GC's job is to automatically manage memory, identifying and cleaning up data that is no longer being used by your application.

This sounds like a fantastic productivity feature, and for a decade, it was. But it comes with a hidden, heavy cost.

To do its job, a Garbage Collector competes with your application for CPU and, in many runtimes, must periodically pause application threads while it scans or compacts memory. These pauses are often called "stop-the-world" events. Modern collectors do much of their work concurrently and keep most pauses to milliseconds or less, but the work still consumes CPU cycles that are not serving requests. More importantly, pauses make tail latency less predictable. A GC pause at the exact moment a critical request arrives can cause a latency spike that breaks your SLA.
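To see why occasional pauses matter more for SLAs than for averages, here is a minimal sketch that computes nearest-rank percentiles over a synthetic latency series. The numbers are assumptions chosen for illustration (a 5 ms baseline, with every 50th request paying an extra 40 ms pause), not measurements of any real garbage collector, and `simulate`, `percentile`, and `mean_ms` are hypothetical helper names.

```rust
/// Synthetic workload (assumed numbers, not a benchmark): every
/// `pause_every`-th request pays an extra `pause_ms` on top of `base_ms`.
fn simulate(requests: usize, base_ms: u64, pause_every: usize, pause_ms: u64) -> Vec<u64> {
    (1..=requests)
        .map(|i| {
            if pause_every > 0 && i % pause_every == 0 {
                base_ms + pause_ms
            } else {
                base_ms
            }
        })
        .collect()
}

/// Nearest-rank percentile (pct in 0..=100) over latency samples in ms.
fn percentile(samples: &[u64], pct: usize) -> Option<u64> {
    if samples.is_empty() || pct > 100 {
        return None;
    }
    let mut sorted = samples.to_vec();
    sorted.sort_unstable();
    // Ceiling division gives the 1-based nearest rank; clamp to at least 1.
    let rank = ((pct * sorted.len() + 99) / 100).max(1);
    Some(sorted[rank - 1])
}

/// Arithmetic mean in milliseconds.
fn mean_ms(samples: &[u64]) -> Option<f64> {
    if samples.is_empty() {
        return None;
    }
    Some(samples.iter().sum::<u64>() as f64 / samples.len() as f64)
}

fn main() {
    let samples = simulate(1000, 5, 50, 40);
    println!("mean = {:?} ms", mean_ms(&samples));
    println!("p50  = {:?} ms", percentile(&samples, 50));
    println!("p99  = {:?} ms", percentile(&samples, 99));
}
```

With these assumed inputs the mean stays under 6 ms and the median stays at 5 ms, while the 99th percentile jumps to 45 ms. An SLA written against p99 notices the pauses even when the average looks healthy.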

To compensate for this overhead, the usual response is to over-provision (GC tuning helps, but only up to a point). You allocate more RAM and more CPU cores than your app strictly needs, just so that when the GC kicks in, the service doesn't grind to a halt. You are paying your cloud provider to manage the waste generated by your own software.

Rust: Zero-Waste Architecture by Design

This is where Rust changes everything. Rust has no Garbage Collector.

Instead, Rust uses a system of "ownership" and "borrowing" that is checked at compile time. The compiler tracks which part of the code owns each value and inserts the cleanup at the point where that owner goes out of scope, so memory is freed deterministically without a separate runtime collector.
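A minimal sketch of what this looks like in practice, using only the standard library. The type `RequestBuffer` and the functions `inspect`, `consume`, and `run_demo` are hypothetical names invented for this illustration; the `Drop` implementation exists only to record the moment each value is freed.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Hypothetical stand-in for a per-request buffer. It logs when it is freed.
struct RequestBuffer {
    name: &'static str,
    log: Rc<RefCell<Vec<String>>>,
}

impl Drop for RequestBuffer {
    // Runs at the point the owner goes out of scope; no collector is involved.
    fn drop(&mut self) {
        self.log.borrow_mut().push(format!("freed {}", self.name));
    }
}

/// Borrows the buffer: reads it without taking ownership.
fn inspect(buf: &RequestBuffer) -> usize {
    buf.name.len()
}

/// Takes ownership: the buffer is freed when this function returns.
fn consume(buf: RequestBuffer) -> usize {
    buf.name.len()
}

fn run_demo() -> Vec<String> {
    let log: Rc<RefCell<Vec<String>>> = Rc::new(RefCell::new(Vec::new()));
    {
        let a = RequestBuffer { name: "a", log: Rc::clone(&log) };
        let b = RequestBuffer { name: "b", log: Rc::clone(&log) };

        inspect(&a); // borrow: `a` is still owned by this scope afterwards
        log.borrow_mut().push("borrowed a".to_string());

        consume(b); // move: `b` is dropped at the end of `consume`
        log.borrow_mut().push("end of scope".to_string());
    } // `a` is dropped here, at the closing brace

    let events = log.borrow().clone();
    events
}

fn main() {
    for event in run_demo() {
        println!("{event}");
    }
}
```

The recorded order is "borrowed a", "freed b", "end of scope", "freed a". Each free happens at a point you can read directly off the source code, which is what makes the memory behavior predictable.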

The economic and technical impacts of this architecture are profound:

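Zero "Stop-the-World" Pauses: With no collector, there are no collector pauses. Memory is still allocated and freed, but at points fixed at compile time rather than whenever a runtime decides to scan the heap. The CPU time you pay for goes to serving user traffic rather than background memory cleanup, and latency becomes far more predictable.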

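Leaner Footprint: Without a managed heap and runtime to carry, Rust applications often use substantially less RAM than their Java counterparts (the gap with Go is usually smaller). As an illustrative figure rather than a benchmark, a service that needs 1GB of RAM in Java might run in around 100MB in Rust, depending on the workload.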

High CPU Efficiency: Rust’s performance is comparable to C++. Your code is compiled ahead of time to optimized native machine code, with no interpreter or JIT warm-up, so more useful work gets done per CPU cycle.

The Bottom Line: Handle the Same Traffic on 1/3 the Hardware

Let’s translate "efficiency" into terms that a DevOps engineer or startup founder understands.

The current paradigm in Python (FastAPI/Django) or Java (Spring Boot) often means scaling your container orchestration (Kubernetes, etc.) based on memory or CPU usage that is artificially inflated by language runtimes.

If your Python app handles 1,000 requests/second but is consuming 80% of its allocated CPU due to interpreted language overhead and GC activity, you have to spin up a second container to handle any more load.

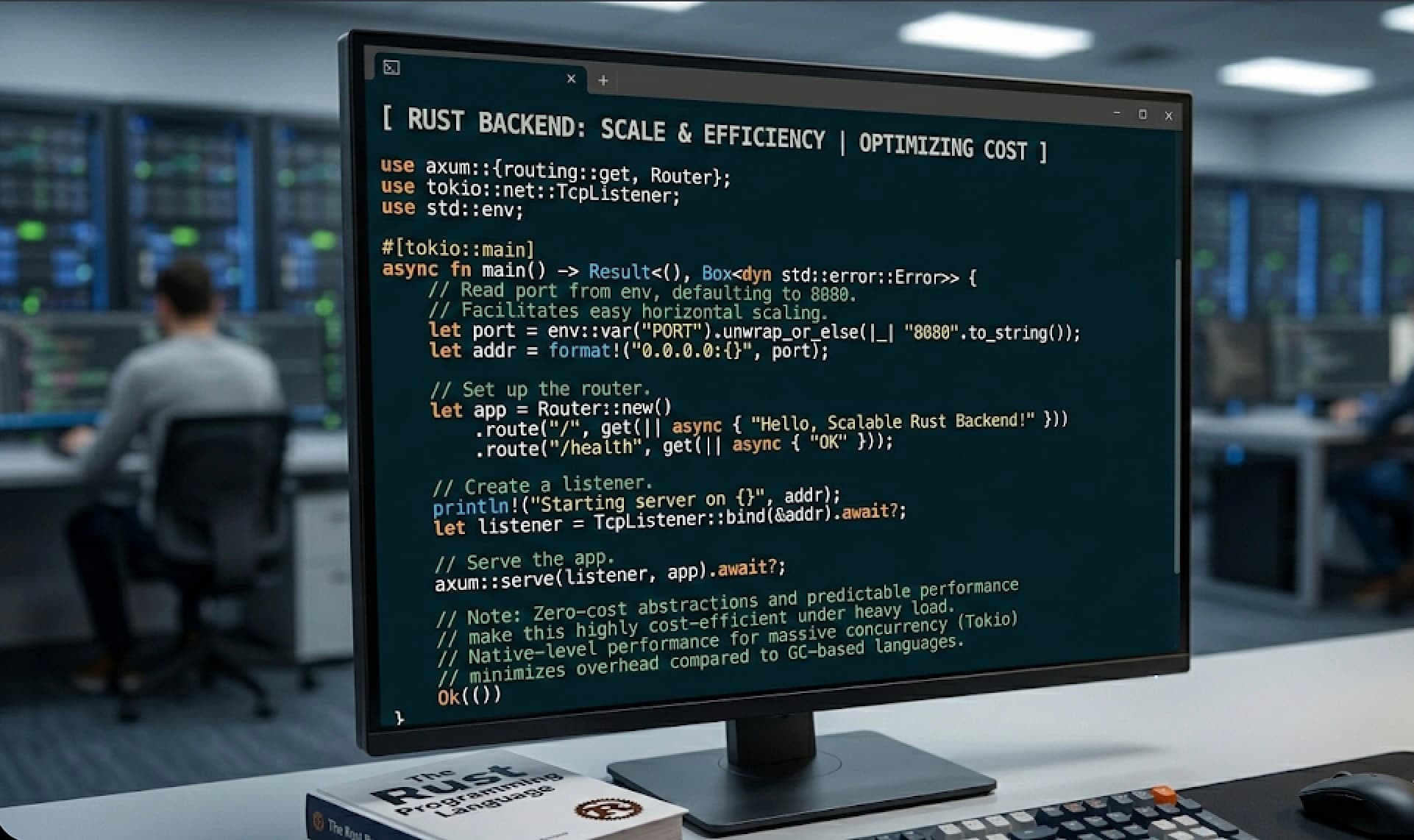

In Rust (using a framework like Axum or Actix), that same service, running on identical hardware, might be using only 20% of its CPU to handle the same 1,000 requests.

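The critical shift is this: each Rust container has far more headroom. If throughput scaled perfectly linearly, those illustrative figures would imply four times the capacity per container. Allowing for safety margins and for bottlenecks that have nothing to do with the language (databases, network I/O), a more conservative planning figure is that you can handle the same volume of user traffic with about one-third of the containers.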

For a CTO, this is game-changing. For compute-bound services, it means you can potentially cut your container infrastructure bill by 60-70% without degrading the user experience. For a scaling startup, that saved capital isn't just a number; it’s an opportunity to reinvest in product, marketing, or hiring.
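As a rough sanity check on the arithmetic in this section, here is a minimal sketch of the sizing calculation. It assumes throughput scales linearly with CPU utilization, which real services only approximate, and it reuses the illustrative figures above (1,000 requests/second at 80% CPU versus 20% CPU). The 12,000 requests/second total and the 80% utilization ceiling are assumptions added for the example, and `containers_needed` and `savings_pct` are hypothetical helper names.

```rust
/// Containers needed to serve `total_rps`, given that one container was
/// observed handling `measured_rps` at `measured_cpu_pct` utilization and
/// should not exceed `target_cpu_pct`. Assumes linear scaling (an idealization).
fn containers_needed(
    total_rps: u64,
    measured_rps: u64,
    measured_cpu_pct: u64,
    target_cpu_pct: u64,
) -> Option<u64> {
    if measured_cpu_pct == 0 {
        return None;
    }
    // Extrapolated requests/second one container can serve at the target ceiling.
    let capacity = measured_rps * target_cpu_pct / measured_cpu_pct;
    if capacity == 0 {
        return None;
    }
    // Ceiling division: a partially used container still has to be paid for.
    Some((total_rps + capacity - 1) / capacity)
}

/// Percentage reduction in container count, rounded down.
fn savings_pct(before: u64, after: u64) -> Option<u64> {
    if before == 0 || after > before {
        return None;
    }
    Some((before - after) * 100 / before)
}

fn main() {
    let total_rps = 12_000; // assumed total load for the example
    let python = containers_needed(total_rps, 1_000, 80, 80);
    let rust = containers_needed(total_rps, 1_000, 20, 80);
    println!("python containers: {python:?}");
    println!("rust containers:   {rust:?}");
    if let (Some(p), Some(r)) = (python, rust) {
        println!("reduction: {:?}%", savings_pct(p, r));
    }
}
```

Under these idealized assumptions the count drops from 12 containers to 3, a 75% reduction. Treat that as an upper bound: shared bottlenecks and safety margins are why the 60-70% estimate above is the more realistic planning number.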

Beyond the Budget: The Environmental Case for Rust

We cannot discuss efficiency without discussing sustainability. Every extra instance you spin up, every extra CPU cycle you burn, translates to real-world energy use and, depending on how that energy is generated, carbon emissions.

The "Green" backend isn't just a metaphor for efficiency; it’s a necessary step toward responsible growth. A widely cited benchmark study of energy efficiency across programming languages, which AWS has also pointed to in making its own sustainability case for Rust, ranked Rust as one of the most energy-efficient languages, second only to C. In the same kind of benchmarks, garbage-collected and interpreted languages consume more energy per unit of work: a few times more for Java and Go, and far more for Python.

By choosing Rust, you aren't just saving money. You are making a conscious architectural decision to reduce the carbon footprint of your digital products.

The Conclusion: Efficiency as a Competitive Advantage

The "move fast and break things" era of computing is giving way to the era of "move fast and run lean." In a market where operational efficiency defines profitability, your backend language choice is now a strategic business decision.

Building with Rust has a steeper learning curve than Python, absolutely. But for critical, high-scale infrastructure, that upfront investment can pay for itself many times over. The result is a system that is robust, fast, cheaper to operate, and better for the planet.

For the modern CTO or startup founder, the question is no longer "Why use Rust?" The question is "Can we afford not to?"

Navin Hemani

Author